RadarNet: Exploiting Radar for Robust Perception of Dynamic Objects

Abstract

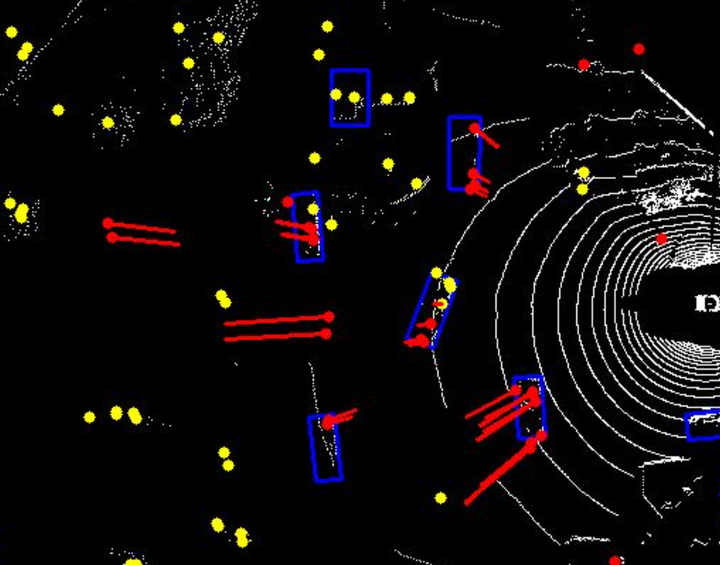

We tackle the problem of exploiting Radar for perception in the context of self-driving as Radar provides complementary information to other sensors such as LiDAR or cameras in the form of Doppler velocity. The main challenges of using Radar are the noise and measurement ambiguities which have been a struggle for existing simple input or output fusion methods. To better address this, we propose a new solution that exploits both LiDAR and Radar sensors for perception. Our approach, dubbed RadarNet, features a voxel-based early fusion and an attention-based late fusion, which learn from data to exploit both geometric and dynamic information of Radar data. RadarNet achieves state-of-the-art results on two large-scale real-world datasets in the tasks of object detection and velocity estimation. We further show that exploiting Radar improves the perception capabilities of detecting faraway objects and understanding the motion of dynamic objects.