Symmetric Part Detection

People

Relevant Publications

The code and data were developed as part of the following publications:

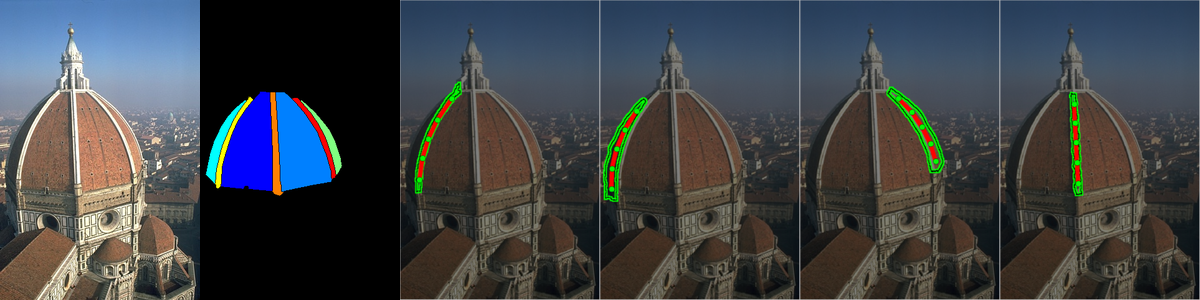

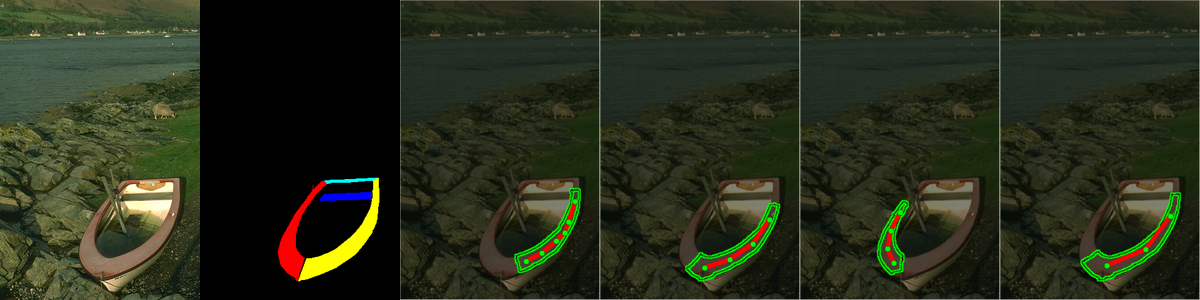

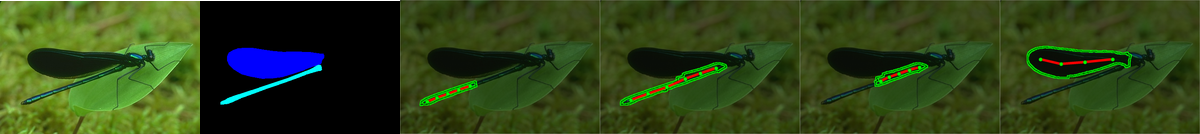

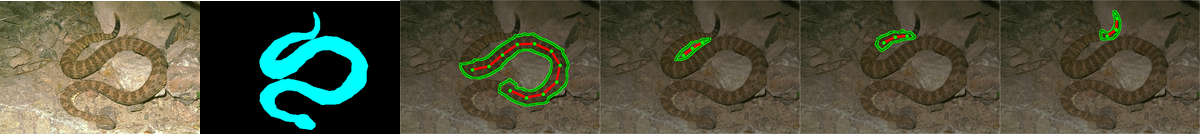

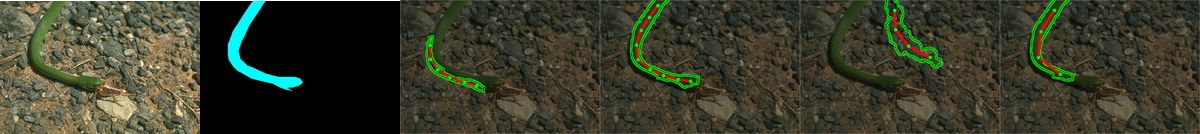

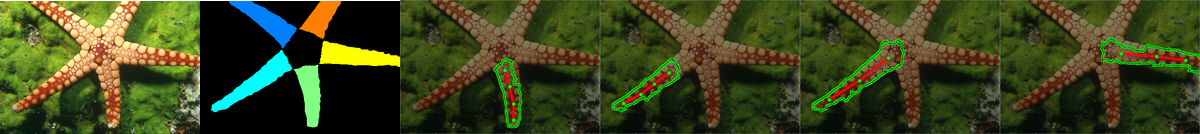

A Framework for Symmetric Part Detection in Cluttered Scenes, T. Lee, S. Fidler, A. Levinshtein, C. Sminchisescu, and S. Dickinson, arXiv preprint, 2015. [paper]

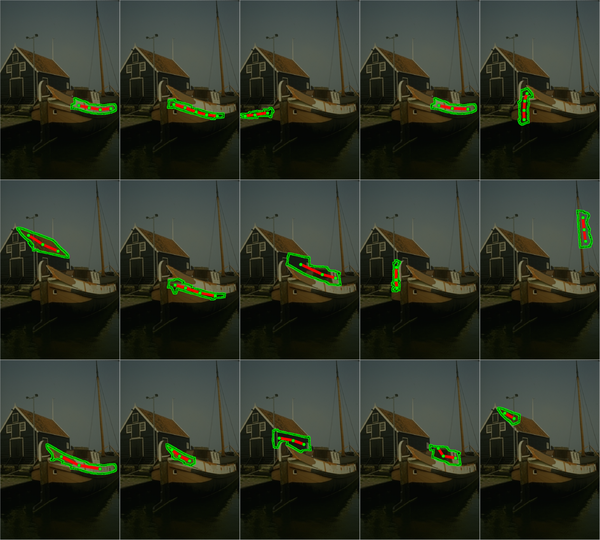

Detecting Curved Symmetry using a Deformable Disc Model, T. Lee, S. Fidler, and S. Dickinson, Proceedings, International Conference on Computer Vision (ICCV), 2013. [paper, poster, code, project page]

Multiscale Symmetric Part Detection and Grouping, A. Levinshtein, C. Sminchisescu, and S. Dickinson, Proceedings, International Conference on Computer Vision (ICCV), 2009. [paper]

Code

- Detection code - SymmetricParts.tar.gz

- Evaluation code - SymmetricParts_evaluation.tar.gz

Data

BSD-Parts consists of 36 images, each with multiple annotated masks corresponding to object parts.

- BSD-Parts with edges and superpixels - BSD-Parts.tar.gz

- BSD-Parts with precomputed detections - BSD-Parts_detections.tar.gz

- WHD with edges and superpixels - WHD.tar.gz

- WHD with precomputed detections - WHD_detections.tar.gz