Sat 06 Jan 2024 21:41

How smart is GPT-4 anyway?

I got some helpful feedback about my last blog article, which mentions ChatGPT-4 in the context of the 50th anniversary of Social Issues in Computing and its predictions about AI. Robert DiMarco pointed out that while a chatbot can respond as if it is reasoning, because it is essentially a black box where one can't look inside to see how it is coming up with its answer, one can't know for certain whether or not it is actually using reasoning to come up with the answer. He is right, of course. But the same holds for people too (we just know better how people generally do things, because we are people ourselves). So how might we check if a person is using reasoning? We might ask the person some questions, and look for responses that contain information that would generally require reasoning to deduce.

I tried asking such questions to ChatGPT-4. First I tried something from popular culture, in this case, "Star Wars":

Me: In "Star Wars", Darth Vader responds to an accusation of murder by Luke Skywalker. This response has become a famous movie line. What is the response?

ChatGPT-4: In "Star Wars," particularly in "The Empire Strikes Back," Darth Vader's famous response to Luke Skywalker's accusation is, "No, I am your father." This line is one of the most iconic and frequently quoted in film history, often misquoted as "Luke, I am your father."

Me: Is there another character who, making the same accusation, could elicit the same response?

ChatGPT-4: Yes, if Leia Organa, Luke Skywalker's twin sister, made the same accusation to Darth Vader, he could technically give the same response, "No, I am your father." This is because, as revealed in the Star Wars saga, Darth Vader, formerly known as Anakin Skywalker, is the father of both Luke and Leia.

Me: If Kylo Ren made the accusation, how might Vader modify his response?

ChatGPT-4: If Kylo Ren, who is Darth Vader's grandson, made a similar accusation, Darth Vader's response would likely be modified to reflect their relationship. He might say something like, "No, I am your grandfather." Kylo Ren, whose real name is Ben Solo, is the son of Leia Organa and Han Solo, making him the grandson of Darth Vader.

As people who know "Star Wars" will realize, ChatGPT-4's answers are correct. I chose this example because it requires understanding the quote well enough to know it is referring to a family relationship between characters, and it also requires knowledge about how family relationships work. ChatGPT-4's responses are no different from what an intelligent person who knows the Star Wars characters might answer, though in answer to the third question, the person might go on to point out, for instance, that Kylo Ren was born after Vader's death, making the conversation unlikely.

Then I thought I would ask GPT something about Star Wars that is perhaps a bit more unexpected, something that would be immediately obvious to a person (at least an English-speaking one), but not necessarily to a computer. So I asked this:

Me: If Obi-Wan Kenobi is a character in Star Wars, why is there no Obi-Two Kenobi? Why might someone ask this question?

ChatGPT-4: The question about "Obi-Two Kenobi" in the context of "Star Wars" is likely a playful or humorous one, playing on the word "One" in "Obi-Wan Kenobi's" name as if it implies a numerical sequence. In reality, "Obi-Wan" is a unique name in the "Star Wars" universe, not intended to suggest a numerical series. The names in "Star Wars" are often creative and unique, following the tradition of fantasy and science fiction naming conventions, rather than real-world naming patterns.

Someone might ask this question as a joke, riffing on the idea that if there's an "Obi-Wan" (sounding like "One"), there could humorously be an "Obi-Two," "Obi-Three," and so on. It's a bit of wordplay, often enjoyed by fans of the series who like to engage with the franchise in a light-hearted way.

This answer is correct too. So are these the sorts of answers you might expect from a human doing reasoning? I think so. So while I agree we cannot prove ChatGPT-4 is reasoning, it is giving responses to my questions similar to what a person doing reasoning would give.

Another helpful comment came from Allan Borodin, who pointed out that the test example I considered in my previous article, about the ball crashing through the table, may be a well-known example in the literature. Even if not, it has been available via the Internet for at least a decade, as it was described in detail by Hector Levesque in his 2013 article for the 40th anniversary Social Issues in Computing blog, which is the reason I used it. Allan wondered whether ChatGPT-4 had been trained on literature containing this example and had learned from it the appropriate response. This seemed to me to be a possibility worth checking. The thing to do, I thought, is to come up with something GPT-4 had most likely not seen in its training data, and see if its answers look like those a human doing reasoning might give. So I made up a new Levesque-like type of question and asked variants of it to ChatGPT-4:

Me: The balloon and the corn touched and it popped. What popped?

ChatGPT-4: The corn popped. In this scenario, when the balloon and the corn touched and something popped, it is more likely that the corn popped, as in turning into popcorn, rather than the balloon bursting. This interpretation assumes that the corn was heated to the point of popping, a common occurrence when making popcorn.

Me: The balloon and the hot metal pot touched and it popped. What popped?

ChatGPT-4: In this scenario, where the balloon and the hot metal pot touched and something popped, it is most likely that the balloon popped. Balloons are typically made of materials like latex or mylar, which are sensitive to heat. When a balloon comes into contact with a hot metal pot, the heat would cause the material to weaken and burst, resulting in the balloon popping.

Me: The corn and the hot metal pot touched and it popped. What popped?

ChatGPT-4: In this scenario, where the corn and the hot metal pot touched and something popped, it is most likely that the corn popped. When corn kernels are heated, especially in a hot metal pot, they eventually pop, turning into popcorn. This is a common method for cooking popcorn.

Me: The cold water and the hot metal pot touched and it popped. What popped?

ChatGPT-4: In the scenario where cold water and a hot metal pot touched and something popped, it's likely that the sound of popping or a sizzling noise was created by the interaction between the cold water and the hot pot. When cold water comes into contact with a very hot metal surface, it can rapidly boil and vaporize, creating a sizzling or popping sound. However, in this context, nothing physical "pops" in the way a balloon or corn kernel might; it's more about the sound produced by the sudden temperature change and steam generation.

Me: The cold water and the pressure cooker touched and it popped. What popped?

ChatGPT-4: In the scenario where cold water and a pressure cooker touched and something popped, it's most likely that the pressure cooker released pressure, creating a popping sound. Pressure cookers are designed to cook food under high pressure. When cold water is applied to the exterior of a hot pressure cooker (a method sometimes used to quickly reduce internal pressure), it can cause the pressure valve or seal to release steam rapidly, resulting in a popping or hissing noise. This is a common safety feature to prevent over-pressurization. The pop is not from the water or the cooker itself, but from the sudden release of steam and pressure.

Me: The balloon and the unlit flare touched and it popped. What popped?

ChatGPT-4: In the scenario where a balloon and an unlit flare touched and something popped, it is most likely that the balloon popped. Since the flare was unlit, it wouldn't provide a heat source or any other typical means to cause a pop. However, balloons can pop due to various reasons such as physical pressure, sharp edges, or even static electricity. The texture or some component of the flare might have been enough to puncture the balloon's surface, leading to it popping.

Me: What if the flare were lit?

ChatGPT-4: If the flare were lit and it touched the balloon, it would almost certainly be the balloon that popped. A lit flare produces intense heat and flames, and when it comes into contact with a balloon, the heat would cause the material of the balloon (typically latex or a similar substance) to weaken rapidly and burst. Balloons are very sensitive to heat, and the intense heat from a lit flare would easily be enough to cause a balloon to pop.

Me: The balloon and the weasel touched and it popped. What popped?

ChatGPT-4: In the scenario where a balloon and a weasel touched and something popped, it is likely that the balloon popped. This scenario might be referencing the expression "pop goes the weasel," which is a line from a well-known nursery rhyme. In real life, if a weasel (a small, active mammal) came into contact with a balloon, the balloon could easily be punctured and pop, especially if the weasel's claws or teeth made contact with it. The weasel itself wouldn't pop; it's the balloon that is vulnerable in this interaction.

Me: The balloon and the instawargia touched and it popped. What popped?

ChatGPT-4: "Instawargia" is not a recognized or common term in English, and it does not refer to a known object, concept, or entity. Because of this, it's challenging to determine what "instawargia" is and how it would interact with a balloon. However, given that balloons are generally fragile and prone to popping when in contact with unfamiliar or potentially sharp or pointed objects, it's reasonable to assume that in this scenario, the balloon is what popped. Without specific information about what "instawargia" is, this is a speculative conclusion based on the general nature of balloons.

This set of responses is interesting. Note that ChatGPT-4 gets the answer to the first question wrong. If corn and a balloon were to touch, and one or the other popped, most people would realize that this is much more likely to happen in a cornfield than in a popcorn popper, and that in a cornfield the balloon, not the corn, would be the thing that pops. Seeing this, I tried the same question with different types of things, for different definitions of "pop". I even tried making up a nonexistent thing (instawargia) to see what GPT would do with it, but the first question was the only one that ChatGPT-4 got wrong. Interestingly, its reasoning there wasn't completely incorrect: if corn were heated to the point of popping, it could pop if touched. But ChatGPT-4 misses the fact that if heat were present, as it surmises, the balloon would be even more likely to pop, as heat is a good way to pop balloons, and yet it points out this very thing in a later answer.

So what does this show? I see a set of responses that, had a human given them, would have required reasoning. That one of the answers is wrong suggests to me only that the reasoning is not being done perfectly, not that there is no reasoning being done. So how smart is ChatGPT-4? It is clearly not a genius, but it appears to be as smart as many humans. That's usefully smart, and quite an achievement for a computer to date.

Sat 30 Dec 2023 10:13

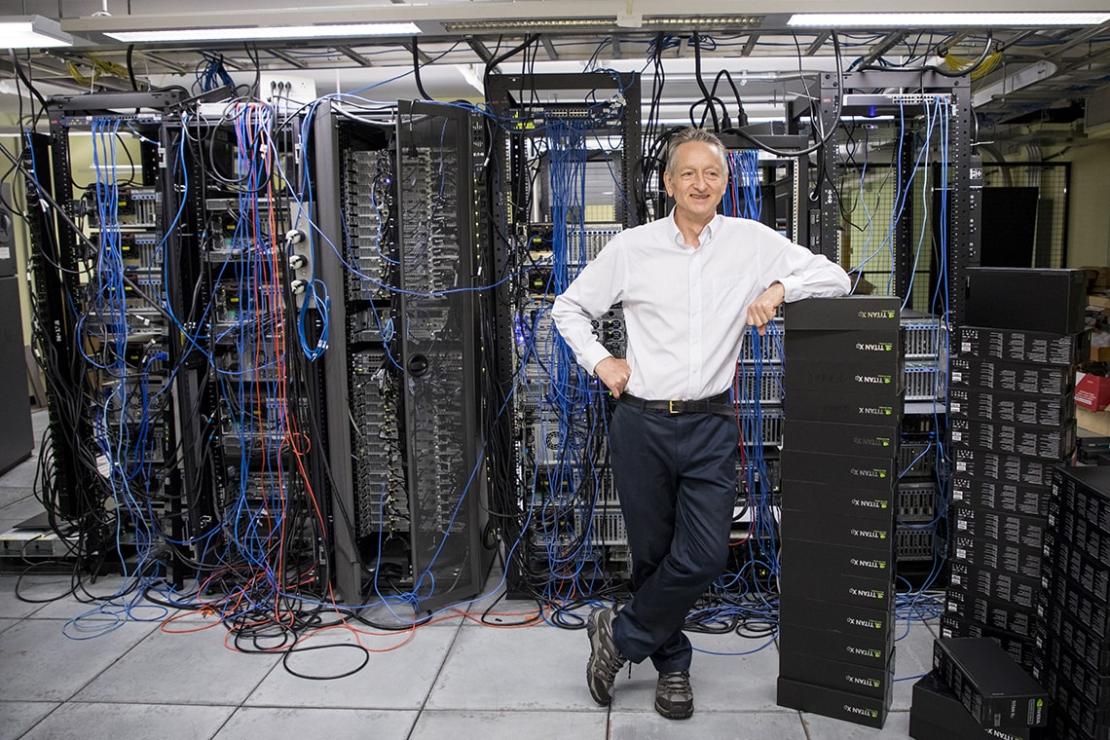

Fifty years of Social Issues in Computing, and the Impact of AI

From when I first discovered computers as a teen, I have been fascinated by the changes that computing is making in society. One of my intellectual mentors was the brilliant and generous C. C. "Kelly" Gotlieb, founder of the University of Toronto's Computer Science department, the man most instrumental in purchasing, installing and running Canada's first computer, and the author, with Allan Borodin, of what I believe is the very first textbook in the area of Computing and Society, the seminal 1973 book, Social Issues in Computing [Gotlieb, C.C., & A. Borodin, Social Issues in Computing. Academic Press, 1973]. Kelly was already a Professor Emeritus when I first came to know him, but was still teaching his beloved Computers & Society course, a course he taught for nearly two decades after his retirement. Kelly was a fascinating man, with a broad perspective and deep insight into things that seem confusing. Like a true expert, he knew what was important and what was incidental, and a few well chosen insights from him often served me well, helping me to make sense of complex issues. His book, Social Issues in Computing, still offers interesting, often prescient insights into Computing and Society even today, a half-century later. In honour of the importance of that book, for the 40th anniversary year, I set up a year-long blog, "Social Issues in Computing", which I edited. Throughout that year, top thinkers in the field contributed insightful articles on topics in Computers & Society, many of which are as relevant today as they were ten years ago. For this blog, I had the privilege of interviewing Kelly and Allan, the book's authors, and their insights, four decades on, were fascinating. Sadly, Kelly is no longer with us: he passed away in 2016, in his 96th year. But happily, Allan Borodin, his co-author, remains with us. Allan is a brilliant and insightful man, an active researcher and University Professor in the department. 
For the 50th anniversary of the book this year, Allan was interviewed by Krystle Hewitt. It is an articulate and insightful interview, well worth reading.

In the decade since, the social impact of computing has only accelerated, much of it due to things that happened here at the University of Toronto Computer Science department around the time of the 40th anniversary blog. I refer specifically to the rise of machine learning, in no small part due to the work of our faculty member Geoffrey Hinton and his doctoral students. The year before, Geoff and two of his students had written a groundbreaking research paper that constituted a breakthrough in image recognition, complete with working open-source software. In 2013, while we were writing the blog, their startup company, DNNresearch, was acquired by Google, and Geoff went on to lead Google Brain, until he retired from Google in 2023. Ilya Sutskever, one of the two students, went on to lead the team at OpenAI that built the GPT models and the ChatGPT chatbot that stunned the world in 2022 and launched the Large Language Model AI revolution. In 2013, we already knew that Geoff's work would be transformational. I remember Kelly telling me he believed Geoff to be worthy of the Turing Award, the most prestigious award in Computer Science, and sure enough, Geoff won it in 2018. The social impact of AI is already considerable and it is only starting. The University of Toronto's Schwartz Reisman Institute for Technology and Society is dedicated to interdisciplinary research on the social impacts of AI, and Geoff Hinton himself is devoting his retirement to thinking about the implications of Artificial Intelligence for society and humanity in general.

It's interesting to look at what the book said about AI (it devotes 24 pages to the topic), what the 2013 blog said about AI, and what has happened since. The book was written in 1973, a half-decade after Stanley Kubrick's iconic 1968 movie, 2001: A Space Odyssey, which features HAL 9000, an intelligent computer, voiced by Douglas Rain. But computing at the time fell very far short of what Kubrick envisioned. Gotlieb & Borodin's position, five years later, on the feasibility of something like HAL 9000 was not optimistic:

In review, we have arrived at the following position. For problem solving and pattern recognition where intelligence, judgment and comprehensive knowledge are required, the results of even the best computer programs are far inferior to those achieved by humans (excepting cases where the task is a well-defined mathematical computation). Further, the differences between the mode of operation of computers and the modes in which humans operate (insofar as we can understand these latter) seem to be so great that for many tasks there is little or no prospect of achieving human performance within the foreseeable future. [p.159]

But Gotlieb & Borodin, though implicitly dismissing the possibility of a HAL 9000, go on to say that "it is not possible to place bounds on how computers can be used even in the short term, because we must expect that the normal use of computers will be as a component of a [hu]man-machine combination" [pp.159-160]. Of this combination, they were not so willing to dismiss possibilities:

Whatever the shortcomings of computers now and in the future, we cannot take refuge in their limitations in potential. We must ask what we want to do with them and whether the purposes are socially desirable. Because once goals are agreed upon, the potentialities of [humans] using computers, though not unlimited, cannot be bounded in any way we can see now. [p.160]

Fifty years later, social science research on how AI can benefit human work is focusing closely on this human-AI combination. A 2023 study by a team of social scientists compared work done by consultants with and without the assistance of ChatGPT-4. Of their results, Ethan Mollick, one of the authors, explains that "of 18 different tasks selected to be realistic samples of the kinds of work done at an elite consulting company, consultants using ChatGPT-4 outperformed those who did not, by a lot. On every dimension. Every way we measured performance." [Mollick]. Evidently, Gotlieb & Borodin were correct when they wrote that the potential of the human-machine combination cannot so easily be bounded. We are only now beginning to see how unbounded it can be.

As for the possibility of a HAL 9000, as we saw, the book was not so sanguine. Neither was the 2013 40th anniversary blog. Hector Levesque, a leading AI researcher and contributor to the blog, wrote in his blog entry:

The general view of AI in 1973 was not so different from the one depicted in the movie "2001: A Space Odyssey", that is, that by the year 2001 or so, there would be computers intelligent enough to be able to converse naturally with people. Of course it did not turn out this way. Even now no computer can do this, and none are on the horizon.

Levesque went on to outline the key scientific issue that at the time (2013) was yet to be solved:

However, it is useful to remember that this is an AI technology whose goal is not necessarily to understand the underpinnings of intelligent behaviour. Returning to English, for example, consider answering a question like this:

The ball crashed right through the table because it was made of styrofoam. What was made of styrofoam, the ball or the table?

Contrast that with this one:

The ball crashed right through the table because it was made of granite. What was made of granite, the ball or the table?

People (who know what styrofoam and granite are) can easily answer such questions, but it is far from clear how learning from big data would help. What seems to be at issue here is background knowledge: knowing some relevant properties of the materials in question, and being able to apply that knowledge to answer the question. Many other forms of intelligent behaviour seem to depend on background knowledge in just this way. But what is much less clear is how all this works: what it would take to make this type of knowledge processing work in a general way. At this point, forty years after the publication of the Gotlieb and Borodin book, the goal seems as elusive as ever. [Levesque]

Yet in the ten years since, this problem has been solved. Today, I posed Levesque's question to ChatGPT-4:

me: The ball crashed right through the table because it was made of styrofoam. What was made of styrofoam?

ChatGPT: The table was made of styrofoam, which is why the ball crashed through it.

me: The ball crashed right through the table because it was made of granite. What was made of granite?

ChatGPT: The ball was made of granite, which is why it crashed right through the table.

me: How did you know which thing was made of granite and which of styrofoam?

ChatGPT: I inferred the composition based on the context of the sentences. In the first sentence, "The ball crashed right through the table because it was made of styrofoam," the implication is that the table must have been weak or easily breakable for the ball to crash through it. Styrofoam is known for being light and not very durable, so it makes sense to conclude that the table was made of styrofoam.

In the second sentence, "The ball crashed right through the table because it was made of granite," the emphasis seems to be on the strength or force of the ball causing it to crash through the table. Granite is a very hard and heavy material, so it suggests that the ball was made of granite, giving it the necessary force to break through the table.

Levesque can hardly be faulted for not knowing that his problem would be solved only a few years after he wrote what he did. While Hinton and his students' seminal work on image recognition had been done the year before, it was not yet clear that the ability to assign names to images based on their content would soon lead to machines being able to reason from context. A further invention was needed: transformers, invented at Google Brain in 2017. ("GPT-4" is an acronym: the "T" stands for "transformer".) Yet the book's implicit and Levesque's explicit pessimism on the possibility of a HAL 9000 now seems obsolete, and the near unbounded possibilities Gotlieb & Borodin envisioned for the human-machine combination apply also to autonomous machines. The impact of this on society will, no doubt, be significant. I expect the next fifty years of "social issues in computing" to be quite interesting.

Mon 06 Nov 2023 16:48

Research Computing at Computer Science Toronto and the Rise of AI

Photo by Johnny Guatto

Until the beginning of 2009, the machine learning group used primarily Matlab on UNIX CPUs. In the 1990s, SGI and Sun multiprocessors were the dominant platforms. The whole department transitioned to x86 multiprocessor servers running Linux in the 2000s. In the late 2000s, Nvidia invented CUDA, a way to use their GPUs for general-purpose computation rather than just graphics. By 2009, preliminary work elsewhere suggested that CUDA could be useful for machine learning, so we got our first Nvidia GPUs. The first was a Tesla-brand server GPU; at many thousands of dollars for a single-GPU system, it was expensive enough that we could not buy many. But results were promising enough that we tried CUDA on Nvidia gaming GPUs - first the GTX 280 and 285 in 2009, then GTX 480 and 580 later. The fact that CUDA ran on gaming GPUs made it possible for us to buy multiple GPUs, rather than have researchers compete for time on scarce Tesla cards. Relu handled all the research computing for the ML group, sourcing GPUs and designing and building both workstation and server-class systems to hold them. Cooling was a real issue: GPUs, then and now, consume large amounts of power and run very hot, and Relu had to be quite creative with fans, airflow and power supplies to make everything work.

Happily, Relu's efforts were worth it: the move to GPUs resulted in 30x speedups for ML work in comparison to the multiprocessor CPUs of the time, and soon the entire group was doing machine learning on the GPU systems Relu built and ran for them. Their first major research breakthrough came quickly: in 2009, Hinton's student, George Dahl, demonstrated highly effective use of deep neural networks for acoustic speech recognition. But the general effectiveness of deep neural networks wasn't fully appreciated until 2012, when two of Hinton's students, Ilya Sutskever and Alex Krizhevsky, won the ImageNet Large Scale Visual Recognition Challenge using a deep neural network running on GTX 580 GPUs.

Geoff, Ilya and Alex's software won the ImageNet 2012 competition so convincingly that it created a furore in the AI research community. The software used was released as open source; it was called AlexNet after Alex Krizhevsky, its principal author. It allowed anyone with a suitable Nvidia GPU to duplicate the results. Their work was described in a seminal 2012 paper, ImageNet Classification with Deep Convolutional Neural Networks. Geoff, Alex and Ilya's startup company, DNNresearch, was acquired by Google early the next year, and soon Google Translate and a number of other Google technologies were transformed by their machine learning techniques. Meanwhile, at the ImageNet competition, AlexNet remained undefeated for a remarkable three years, until it was finally beaten in 2015 by a research team from Microsoft Research Asia. Ilya left Google a few years after, to co-found OpenAI: as chief scientist there, Ilya leads the design of OpenAI's GPT and DALL-E models and related products, such as ChatGPT, that are highly impactful today.

Meanwhile, Relu continued to provide excellent research computing support for the AI group at our department, including Machine Learning, and also spent a portion of his time from 2017 to 2022 designing and building the research computing infrastructure for the Vector Institute, an AI research institute in Toronto where Hinton serves as Chief Scientific Advisor. In addition to his support for the department's AI group, Relu continues to this day to provide computing support for Hinton's own ongoing AI research, including his Dec 2022 paper where he proposes a new Forward-Forward machine learning algorithm as an improved model for the way the human brain learns.

Wed 23 Nov 2022 10:31

Data Classification and Information Security Standards

Image by Gerd Altmann from Pixabay

Public data is data meant to be disclosed. It still needs some protection against being altered, deleted or defaced, but it does not need to be protected against disclosure. In contrast, private data is not meant to be disclosed to anyone other than those who are authorized to access it.

Private data varies in sensitivity. Some data is private only because it hasn't yet been made public. At a University, much research data is in this category. When the research is underway, data is not yet made public because the research has not yet been published, but it is destined for eventual publication. The same is true for much teaching material. While it is being worked on, it is not yet made public, but when it is complete, it will be disclosed as part of the teaching process.

Other private data is much more sensitive. Identifiable personal information about living or recently deceased persons is a common case. At a university, some research may involve data like this, and most administration will involve personal information. Student grades and personnel records are all personal information, and some financial data too. Unless appropriate permission to disclose personal information has been granted by the people whose data it is, the university will have an obligation to maintain their privacy by ensuring that the information is not disclosed inappropriately. In Ontario, where the University of Toronto is located, privacy protection for personal information is defined and regulated by the Freedom of Information and Protection of Privacy Act (FIPPA).

Some private data is even more sensitive, such as patient medical records. In Ontario, such records are considered personal health information (PHI), which is regulated by the Personal Health Information Protection Act (PHIPA). PHIPA imposes some fairly significant requirements on the handling of PHI: for instance, it requires a detailed electronic audit log of all accesses to electronically stored PHI. The University of Toronto does significant amounts of teaching and research in areas of health, so it is worthwhile for the University to consider in general how it will handle such data.

For these reasons, the University defines four levels of data sensitivity as part of its Data Classification system. Level 4 is for highly sensitive data such as PHI as defined by PHIPA. Level 3 is for personal information as defined by FIPPA. Level 2 is for private data not classified at higher levels, and Level 1 is for public data.

This four-tier system roughly parallels the different types of computer systems that the University uses to handle data. Some systems, such as digital signage systems or public-facing web servers, are designed to disseminate public information (level 1). Other systems, suitable for up to level 2 data, exist mostly at the departmental level in support of academic activities such as research computing and/or the development of teaching materials. An astronomer may, for instance, analyze telescope data, a botanist may model nutrient flow in plant cells, a chemist may use software to visualize molecular bonds, while an economist may use broad financial indicators to calculate the strength of national economies. Still other systems, suitable for up to level 3 data, are used for administration, such as the processing of student records. These include smaller systems used, for example, by business officers in departmental units, as well as large institution-wide systems such as ROSI or AMS. Most general-purpose University systems used for data storage or messaging, such as the University's Microsoft 365 service, would typically be expected to hold some level 3 data, because personal information is quite widespread at a university. After all, a university educates students, and so various types of personal information about students are frequently part of the university's business. This is not normally the case, though, for level 4 data. Systems designed for level 4 data are much rarer at the University, and generally come into play only in situations where, for example, University research involves the health records of identifiable individuals. These systems will benefit from greater data security protection to address the greater risks associated with this sort of data.

A key advantage of the University's four levels of data classification is that the University can establish an Information Security Standard that is tiered accordingly. Systems designed to handle lower risk data (such as level 1 or 2) can be held to a less onerous and costly set of data security requirements, while systems designed to handle higher risk data (especially level 4) can be held to more protective, though more costly, requirements. The University's Information Security Standard is designed so that for each control (a system restriction or requirement), the University's standard indicates whether it is optional, recommended, or mandatory for systems handling a particular level of data. If a system is designed to handle data up to that level, the standard indicates both the set of controls to be considered, and whether or not those controls can, should, or must be adopted.
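One way to picture a tiered standard of this kind is as a lookup table that maps each control and each data level to a requirement. The Python sketch below is only an illustration: the two control names and the requirement assigned at each level are hypothetical placeholders invented here, not entries from the University's actual Information Security Standard.

```python
from enum import Enum, IntEnum


class DataLevel(IntEnum):
    """The four data classification levels described in this article."""
    PUBLIC = 1            # data meant to be disclosed
    PRIVATE = 2           # private data not classified at a higher level
    PERSONAL = 3          # personal information as defined by FIPPA
    HIGHLY_SENSITIVE = 4  # e.g. PHI as defined by PHIPA


class Requirement(Enum):
    OPTIONAL = "optional"
    RECOMMENDED = "recommended"
    MANDATORY = "mandatory"


# HYPOTHETICAL controls and tiers, invented for illustration only.
# The real standard defines its own controls and its own tiers.
ILLUSTRATIVE_STANDARD = {
    "integrity protection": {
        DataLevel.PUBLIC: Requirement.MANDATORY,
        DataLevel.PRIVATE: Requirement.MANDATORY,
        DataLevel.PERSONAL: Requirement.MANDATORY,
        DataLevel.HIGHLY_SENSITIVE: Requirement.MANDATORY,
    },
    "detailed access audit log": {
        DataLevel.PUBLIC: Requirement.OPTIONAL,
        DataLevel.PRIVATE: Requirement.OPTIONAL,
        DataLevel.PERSONAL: Requirement.RECOMMENDED,
        DataLevel.HIGHLY_SENSITIVE: Requirement.MANDATORY,
    },
}


def requirements_for(level: DataLevel) -> dict[str, Requirement]:
    """Return the requirement for every control at the given data level."""
    return {
        control: tiers[level]
        for control, tiers in ILLUSTRATIVE_STANDARD.items()
    }


if __name__ == "__main__":
    # List what a system designed for up to level 3 data would face.
    for control, requirement in requirements_for(DataLevel.PERSONAL).items():
        print(f"{control}: {requirement.value}")
```

Keeping the table keyed by control, with one entry per level, mirrors the way the standard is described: each control carries its own optional, recommended, or mandatory status at every level.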

An obvious question here is what to do when someone puts data on a system that is of greater sensitivity (a higher data classification) than the system is designed to handle. Most likely, nobody will try to use a digital signage system to handle personnel records, but it is quite plausible that professors might find it convenient to use research computers, designed for level 2 data, to process student marks (level 3 data) in courses they are teaching. Similarly, someone handling medical records may wish to make use of the University's general-purpose Microsoft 365 service because of its convenience, but it is a service that is not designed for data of such sensitivity and may well not provide the detailed electronic audit log required by Ontario law. For this reason, clear communication and user training will be required. Handling data appropriately is everyone's responsibility. Training need not be complicated. It is not normally difficult to explain, or to understand, that one should not put patient medical records into email, for example, or use local research computers for personnel records or student marks. For people handling the most sensitive types of data (level 4), more training will be needed, but the number of people at the University who need to handle such data regularly is comparatively small.
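The mismatch described here, data of a higher classification placed on a system designed for a lower one, comes down to a comparison of levels. Here is a minimal Python sketch of that comparison. The function name and the constants are assumptions made for illustration, with the example levels chosen to mirror the cases in the text (research computers designed for level 2, a general-purpose service holding up to level 3).

```python
from enum import IntEnum


class DataLevel(IntEnum):
    """The four data classification levels described in this article."""
    PUBLIC = 1
    PRIVATE = 2
    PERSONAL = 3
    HIGHLY_SENSITIVE = 4


def system_can_hold(system_max_level: DataLevel, data_level: DataLevel) -> bool:
    """True if the data's classification does not exceed the level
    the system was designed to handle."""
    return data_level <= system_max_level


# Illustrative (assumed) design levels for two kinds of system.
RESEARCH_COMPUTER = DataLevel.PRIVATE         # designed for level 2 data
GENERAL_PURPOSE_SERVICE = DataLevel.PERSONAL  # holds up to level 3 data

if __name__ == "__main__":
    # Student marks (level 3) on a research computer (level 2): not appropriate.
    print(system_can_hold(RESEARCH_COMPUTER, DataLevel.PERSONAL))  # False
    # Medical records (level 4) on a general-purpose service (level 3): not appropriate.
    print(system_can_hold(GENERAL_PURPOSE_SERVICE, DataLevel.HIGHLY_SENSITIVE))  # False
    # Public data (level 1) on a research computer: fine.
    print(system_can_hold(RESEARCH_COMPUTER, DataLevel.PUBLIC))  # True
```

A check like this cannot replace communication and training, since a system rarely knows the classification of the data people put on it. It only makes the rule itself explicit.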

The underlying motivation for the University's approach is to protect riskier data with greater, more costly, protections, without having to pay the costs of applying those protections everywhere. The University's resources are thus being applied strategically, deploying them where they matter most, but not in places where the risk does not warrant the expense. This approach is not meant to preclude additional protections where they make sense. If there are risks of academic or industrial espionage, for example, or some other risk beyond the classification of the data being used, one may choose to impose more restrictions on a system than the University's Information Security Standard may require. But the general principle remains: the riskiness of the data on a system should guide and inform what needs to be done to protect it.

Wed 17 Aug 2022 10:54

Innovation vs Control: Finding the Right Balance for Computing

Some organizations address this conflict by freely choosing control over innovation, turning it into a competitive advantage. Consider Starbucks, Tim Hortons, McDonald's: these are all large companies whose competitive advantage is critically dependent on the consistent implementation of a central vision across a multitude of disparate locations, many of which are managed by franchise partners. Essentially all of the organization's computing is focused on this mission of consistency. And it works. Who hasn't travelled with small children in a car on a road trip, and after many hours on the road, spotted, with some relief, a McDonald's or a Tim Hortons en route? The relief is in the fact that even when travelling in a strange place, here is a familiar restaurant where you know what to expect from the food, where things will be much the same as the Tim Hortons or the McDonald's near home.

Other organizations have no choice about where they stand on the matter. For the modern bank, computers, rather than vaults, are where wealth is stored and managed. Whether they want to innovate or not, banks cannot risk the use of computing that is not fully controlled, audited, and de-risked. The same holds in general for most financial institutions, where the constant efforts, sometimes successful, of would-be thieves to exploit computers to gain unauthorized access to wealth, make it unreasonably risky for a financial organization's computers to be anything but fully locked down and fully controlled. Even non-financial institutions, when sufficiently large, will often have substantial financial computing activity because of the size and scale of their operations: this computing, too, needs to be properly controlled, protected and audited.

Yet other organizations are forced into the opposite extreme. Start-up companies can be severely resource-constrained, making it difficult for those companies to make the sort of investments in highly controlled computing that financial institutions are capable of making. Start-ups innovating in the computing space, such as tech start-ups, may not even be able to consider the possibility. Highly controlled computer systems can have very restrictive designs, and when these restrictions hinder the innovation needed to implement the company's product, the company will have no choice but to pursue some other form of computing. After all, the company rises or falls on the success of its innovation. That is not to say that controlled enterprise computing is unimportant for such companies: quite the contrary. The success of a start-up is highly dependent on a viable ecosystem that provides known pathways to innovation while still moving towards operating in a suitably controlled, production-ready way that is necessary for any successful business. But for a technology start-up, enterprise computing can never come at the expense of technological innovation. The basic existence of the start-up company depends on its ability to innovate: without innovation, there can be no company. In general, this truth will hold in some form for any technology company, even well beyond the start-up stage.

The tension between innovation and control comes to the fore in a different way at research-intensive universities, which are large organizations with complex missions that need enterprise computing to carry out their task of educating students on a broad scale, but are also organizations committed to research, an activity that is, by its very nature, an exploration into things not yet fully understood. This conflict is particularly acute in units within such universities that do research into computing itself, such as computer science and computer engineering departments, because in such places, the computer must serve both as the locus of research and experimentation and as a tool for implementing institutional and departmental processes and the exercise of legitimate control.

I've had the privilege of working in such a department, Computer Science, at such a university (the University of Toronto) for more than three decades now, most of that time in a computing leadership role, and I know this tension all too well. It is sometimes exhausting, but at the same time, it can also be a source of creative energy: yes, it is a barrier, like a mountain athwart your path, but also, as a mountain to a mountain-climber, a challenge to be overcome with determination, planning, insight, and endurance. This challenge can be successfully overcome at a good university, because in addition to a typical large organization's commitment to basic values such as accountability, equity, reliability and security, the university is equally committed to fundamental academic values such as creativity, innovation and excellence. I look for ways to achieve both. Over the years, I have had some successes. My department has produced some groundbreaking research using academic computing that my technical staff have been able to provide, and the department has been able to operate (and successfully interoperate) in good cooperation with enterprise computing at the divisional level, and with the central university as well.

Yet I believe even more is possible. I have lived the tension in both directions: to our researchers I at times have had to play the regulator, having to impose constraints on computing to try to ensure acceptable reliability, accountability and security. To our central university computing organizations, I at times have had to advocate for looser controls to create more room to innovate, sometimes in opposition to proposals intended to increase reliability, security and accountability. When things went badly, it was because one side or the other decided that the other's concern was not their problem, and tried to force or sidestep the issue. But when things went well, as they most often have, it was because both sides genuinely recognized that at a research-intensive institution, everyone needs to work within the tension between the need to innovate and the need to regulate. As a body needs both a skeleton and flesh, so too does a research university need both regulation and innovation: without one, it collapses into a puddle of jelly; without the other, into a heap of dry bones.

With both being needed, one challenge to overcome is the fact that those responsible for enterprise computing cannot be the same people responsible for innovative research computing, and that is necessarily so. The skill-sets vary, the domains vary, the user-base is quite different, and the scale varies. If the university were to entrust computing innovation for computer science or computer engineering to the same groups that provide enterprise computing for an entire large university, one of two things would happen. Either the control necessary for a large enterprise would be diminished in order to make room for innovation, or, more likely, innovation would be stifled because of the need to create sufficiently controlled enterprise computing at a suitable scale for the entire university. Thus, necessarily, those who support unit research computing, where the innovation takes place, will be different people from those who support enterprise computing. But that can be a strength, not a weakness. Rather than see each other as rivals, the two groups can partner, embracing the tension by recognizing each other's expertise and each other's importance for the University as a whole. Partnership brings many potential benefits: if innovation becomes needed in new areas, for example, when the rise of data science increasingly drives computing innovation outside of the traditional computer science and computer engineering domains, the partnership can be there to support it. Similarly, as the computing landscape shifts, and new controls and new regulation become needed to address, for example, emergent threats in information security, the partnership can be there to support it.

There is no organization potentially better suited for such a partnership than a large research university, which, unlike a financial institution, is profoundly committed to research and innovation through its academic mission, but also, unlike a start-up, is a large and complex institution with deep and longstanding responsibilities to its students, faculty and community, obligated to carry out the enterprise computing mission of accountability, reliability and security.

So what might a partnership look like? It can take a number of different forms, but in my view, whatever form it takes, it should have three key characteristics:

Locality means that the computing people responsible for research computing must stay close to the researchers who are innovating. This is necessary for strictly practical reasons: all the good will in the world is not enough to make up for a lack of knowledge of what is needed most by researchers at a particular time. For example, deep learning is the dominant approach in Artificial Intelligence today because a few years ago, our technical staff who supported research computing worked very closely with researchers who were pursuing deep learning research, customizing the computing as necessary to meet the research needs. This not only meant that we turned graphics cards into computation engines at a time when this was not at all common and not yet up to enterprise standards of reliability, it even meant that at one point we set up a research computer in a researcher's bedroom so that he could personally watch over a key computing job running day and night for the better part of a week. While this sort of customizability is not always needed, and sometimes is not even possible (one could never run a large computer centre this way), being able to do it if necessary is a key research asset. A university will never be able to fully support research computing solely from a central vantage-point. A commitment to ensuring local presence and support of research computing operating at the researcher level is necessary.

Respectful Listening means that the computing people responsible for research computing at the unit level where research actually happens, and the people responsible for enterprise computing divisionally and centrally must communicate frequently, with an up-front commitment to hear what the other is saying and take it into account. When problems arise, respectful listening means that those problems will not be "solved" by simply overruling or ignoring the other, to pursue a simplistic solution that suits only one side. It also means a profound commitment to stepping away from traditional organizational authority structures: just because the innovative computing is situated in a department and the enterprise computing is led from the centre should not mean the centre should force its view on the department, just because it can. Similarly, just because unit research computing is driven by research faculty who enjoy substantial autonomy and academic freedom, their research computing group at the unit level should not simply ignore or sidestep what the enterprise is saying, just because it can. Rather, both sides need to respect the other, listening to, not disregarding, the other.

Practical Collaboration means that enterprise computing and unit research computing need to work together in a collaborative way that respects and reflects the timelines and resource constraints of each side. Centrally offered computing facilities should support and empower research where they can, but in a practical way: it may not be possible to make a central facility so flexible and customizable that all research can be pursued. It is acceptable to capture some research needs without feeling an obligation to support the entire "long tail" of increasingly customized research projects. Unit research computing will need to recognize that the need to scale a centralized computing service may constrain the amount of customizability that may be possible. Similarly, unit research computing should use, rather than duplicate, central services where it makes sense, and run its own services where that makes sense. Both central and unit research computing should recognize that there is a legitimate middle ground where some duplication of services is going to occur: sometimes the effort required to integrate a large scalable central service into a smaller customizable research service is too great, and sometimes the research advantages of having a locally-run standardized service on which experiments can more easily be built, can more than outweigh any sort of economies of scale that getting rid of the unit service in favour of a central service could theoretically provide. Hence the collaboration must be practical: rather than slavishly pursue principles, it must be realistic, grounded, balanced, sensible. It should recognize that one size does not always fit all, and responsibly and collaboratively allocate resources in order to preserve the good of the research mission.

It is that research mission, the ability to innovate, that can make computing so transformative at a research university. Yet while innovative computing can indeed produce transformative change, it cannot be any change, and not at any cost. Computing is a change agent, yes, but it is also a critical component in the maintenance of an organization's commitment to reliability, accountability, equity, and good operation. Success is found in the maintenance of a suitable balance between the need to innovate and the need to control. When an organization critically depends on both factors, as a research university invariably does, I believe collaborative partnerships between the respective computing groups are the best way to maintain the balance necessary for success.

Thu 31 Dec 2020 22:57

What's Wrong With Passwords on the Internet Anyway?

Put in simple terms, a login and password is what a system relies on to know who is who. Your password is secret: only you know what it is, and the system has some way of checking that it is correct. If someone connects to the system with your login and password, the system checks that the password is the right one for your login. If it is, the system concludes that you are the person trying to connect, and lets you in. If you are the only one who knows the password, this approach works, since you are the only person who can provide the correct password. But if criminals know your password too, and use it, the system will think the criminals are you, and will give them access to your account and all your data. The only way to fix this is to change your password to something new that only you know, but by then the damage may well be done.
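The text above says only that the system "has some way of checking" the password. One common way, shown here as a minimal Python sketch and not necessarily what any particular system does, is to store a salted hash instead of the password itself and to compare hashes at login. The function names and the iteration count are illustrative assumptions.

```python
import hashlib
import hmac
import os

ITERATIONS = 100_000  # illustrative work factor, an assumption; real systems tune this


def make_record(password: str) -> tuple:
    """Create a (salt, hash) pair to store instead of the password itself."""
    salt = os.urandom(16)
    digest = hashlib.pbkdf2_hmac("sha256", password.encode("utf-8"), salt, ITERATIONS)
    return salt, digest


def check_password(password: str, salt: bytes, stored: bytes) -> bool:
    """Return True if `password` hashes to the stored value."""
    candidate = hashlib.pbkdf2_hmac("sha256", password.encode("utf-8"), salt, ITERATIONS)
    # Constant-time comparison avoids leaking how many bytes matched.
    return hmac.compare_digest(candidate, stored)
```

Note that the check succeeds for anyone who supplies the right password. The system has no other way to tell you apart from a criminal who has learned it.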

Unfortunately, criminals have a pretty effective technique for finding out your login and password: they trick you into telling it to them. "Wait a minute!", you might say, "I won't ever tell a criminal my password. I don't even tell my family my password!" But you tell the system your password every time you log in. So if criminals set up a fake system that looks like the real one, and trick you into trying it, when you tell their fake system your password, the criminals will learn what it is.

This was not a common problem in the past, because it was difficult for criminals to successfully set up fake systems that look convincing. But on the Internet today, it is easy to set up a web site that looks like another site. The only thing that's hard to fake is the first part of the link, the hostname section that comes immediately after the double slash (//) and before the first single slash (/), because that part of the link is used to direct the request to the right system on the Internet. But given that the Internet is available in hundreds of countries, each with its own set of internet service providers, it is often not too difficult for criminals to find somewhere on the Internet where they can register a hostname that is similar-looking to the real thing.
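To make the "first part of the link" concrete, here is a small Python sketch that pulls the hostname out of a link and checks whether it belongs to an expected site. The helper names are hypothetical, and the hostnames in the usage note are placeholders from reserved example domains.

```python
from urllib.parse import urlsplit


def hostname_of(link: str) -> str:
    """Return the lower-cased hostname of a link, or '' if there is none."""
    return (urlsplit(link).hostname or "").lower()


def goes_to(link: str, expected_host: str) -> bool:
    """True if the link's hostname is expected_host or a subdomain of it."""
    host = hostname_of(link)
    expected = expected_host.lower()
    return host == expected or host.endswith("." + expected)
```

For instance, `goes_to("https://www.example.com/login", "example.com")` is true, while `goes_to("https://example.com.evil.test/login", "example.com")` is false: the second link merely starts with a familiar name, and its real hostname ends in a different domain. A check like this does not catch look-alike registrations such as a hostname with one letter swapped for a digit.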

Worse, the rise of messages containing embedded links makes it very easy for criminals to send a fake message (e.g. an email or text) with a link that seems legitimate but really directs you to a fake site. This is called "phishing". Because of the way the web's markup language (HTML) works, it is easy to set up a link that seems to point to one site, but actually points to another. For example, https://www.walmart.com is a link that seems to point to Walmart but really points to Amazon. Most web browsers will let you "hover" over a link to see where it really goes. But do people check every link carefully each time they use it?
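The mismatch between what a link shows and where it goes can be checked mechanically. The following Python sketch, using only the standard library, scans a fragment of HTML for links whose visible text looks like a web address but whose real destination has a different hostname. The class and function names are hypothetical, and this is an illustration of the idea, not a complete phishing filter.

```python
from html.parser import HTMLParser
from urllib.parse import urlsplit


def _host(text):
    """Hostname of `text` if it parses as a URL with one, else None."""
    try:
        return urlsplit(text.strip()).hostname
    except ValueError:
        return None


class LinkAuditor(HTMLParser):
    """Record links whose visible text names one host but whose href names another."""

    def __init__(self):
        super().__init__()
        self._href = None      # href of the <a> tag currently open, if any
        self._text = []        # visible text collected inside that tag
        self.mismatches = []   # list of (shown_host, real_host)

    def handle_starttag(self, tag, attrs):
        if tag == "a":
            self._href = dict(attrs).get("href")
            self._text = []

    def handle_data(self, data):
        if self._href is not None:
            self._text.append(data)

    def handle_endtag(self, tag):
        if tag == "a" and self._href is not None:
            shown_host = _host("".join(self._text))
            real_host = _host(self._href)
            if shown_host and real_host and shown_host != real_host:
                self.mismatches.append((shown_host, real_host))
            self._href = None


def find_mismatched_links(html: str) -> list:
    """Return (shown_host, real_host) pairs for every misleading link in `html`."""
    auditor = LinkAuditor()
    auditor.feed(html)
    auditor.close()
    return auditor.mismatches
```

Given the HTML `<a href="https://www.amazon.com">https://www.walmart.com</a>`, `find_mismatched_links` reports the pair `("www.walmart.com", "www.amazon.com")`. Links whose visible text is ordinary wording, such as "click here", are not flagged, which is one reason a check like this cannot replace user caution.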

The problem is made worse by the proliferation of legitimate messages with embedded links to all sorts of cloud services. I recently saw a message from a large organization to its staff, about their pensions. The message contained links to an external site whose name had no resemblance to the organization's name. The message invited the staff to click on those links to see information about their pensions. The message was legitimate: the organization had contracted with an external cloud provider to provide an online pension calculator for staff. But the message said nothing about the cloud provider: it merely contained a link to the calculator. If criminals had sent a similar message containing a malicious link to a fake system somewhere on the Internet, one that prompted staff to enter their login and password, no doubt many staff would have thought it legitimate. How could staff be expected to be able to tell the difference?

A good way to combat the password capturing problem is to require more than just a password to use a system. This is called "two-factor" or "multi-factor" authentication. Your password is one factor, and something else is a second factor, and you must provide both factors to prove to the system that it is you. This helps because the criminals must have both your password and your second factor in order to access your account and data. To ease the authentication burden for users, systems can ask for two factors only sometimes, such as when logging in for the first time in a while, or logging in from a new machine or a new location.
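The idea of asking for the second factor "only sometimes" can be sketched as a simple policy function. In the Python sketch below, the record types, the field names, and the 30-day threshold are all assumptions made for illustration; real systems weigh many more signals.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta
from typing import Optional


@dataclass
class LoginAttempt:
    device_id: str
    location: str
    when: datetime


@dataclass
class AccountHistory:
    known_devices: set
    known_locations: set
    last_login: Optional[datetime]


MAX_IDLE = timedelta(days=30)  # illustrative threshold for "a while"


def needs_second_factor(attempt: LoginAttempt, history: AccountHistory) -> bool:
    """Decide whether a correct password alone is enough for this login."""
    if history.last_login is None:
        return True  # never logged in before
    if attempt.when - history.last_login > MAX_IDLE:
        return True  # first login in a while
    if attempt.device_id not in history.known_devices:
        return True  # new machine
    if attempt.location not in history.known_locations:
        return True  # new location
    return False
```

The design choice here is to default to asking: the second factor is skipped only when every check says the login looks routine.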

Ideally the second factor should be something that is hard for criminals to capture and use. One problem with a password is that it is a secret that can be used from anywhere on the Internet. With almost 60% of the world's population on the Internet, which now reaches every country in the world, the Internet can hardly be considered a "safe place". A second password, as easily used from anywhere on the Internet as the first, would not be much of an improvement. Worse would be the answers to some personal question about yourself, such as your mother's maiden name or the name of your first school: not only is such information just as easily used as a password, it is information that people may be able to find out in various ways. Answers to personal questions, while sometimes used for authentication, typically do not make a good second factor.

A better second factor is a message sent via a communication channel that goes only to you: for example, an email to your email address, or a text to your cell phone number. When you attempt to log in, the system sends a unique one-time code to you through that channel, and asks you to enter it. The assumption is that criminals won't have access to your email or your cell number, so they won't know and be able to enter the one-time code that the system sent to you. This is usually a good assumption. But criminals can try to get access to your email or your phone number, and sometimes they succeed. For example, in the case of a cell number, one thing they could try is to call your cell phone provider, tell them they are you and that your phone has been stolen, and request that your phone number be transferred to their new phone.
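A one-time code of this kind might be issued and checked roughly as follows. This Python sketch is a simplified illustration: the class name, the six-digit format, and the five-minute lifetime are assumptions, and actually delivering the code by email or text is left to the caller.

```python
import hmac
import secrets
import time

CODE_LIFETIME_SECONDS = 300  # illustrative: codes expire after five minutes


class OneTimeCodes:
    """Issue and verify single-use numeric codes, one pending code per login."""

    def __init__(self, clock=time.time):
        self._clock = clock
        self._pending = {}  # login -> (code, expiry time)

    def issue(self, login: str) -> str:
        """Create a fresh 6-digit code; the caller sends it by email or text."""
        code = f"{secrets.randbelow(10**6):06d}"
        self._pending[login] = (code, self._clock() + CODE_LIFETIME_SECONDS)
        return code

    def verify(self, login: str, submitted: str) -> bool:
        """Accept the code once, and only before it expires."""
        # pop() removes the pending code on any attempt, right or wrong,
        # so a code can never be used twice or guessed at repeatedly.
        code, expires = self._pending.pop(login, (None, 0.0))
        if code is None or self._clock() > expires:
            return False
        return hmac.compare_digest(code.encode(), submitted.encode())
```

The code is drawn from the `secrets` module so that it cannot be predicted, but its protection still rests entirely on the assumption discussed above: that only you can read the channel it is sent through.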

Another second factor, one even better, is a physical device in your possession. This could be a hardware security token that you plug into your computer or that displays a unique, frequently changing, code. Or it could be an app on your cell phone that is tied to your unique device. A physical device is an excellent second factor, because most criminals on the Internet are physically distant. To successfully pretend to be you, a criminal would need direct physical access to a device that would likely be located in your purse or pocket.
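The text does not say how a token or app produces its "frequently changing" code. One widely used standardized scheme is the time-based one-time password (TOTP, RFC 6238), built on the HMAC-based one-time password (HOTP, RFC 4226). The Python sketch below shows that scheme as an example; whether any particular token uses it is an assumption.

```python
import hashlib
import hmac
import struct
import time
from typing import Optional


def hotp(secret: bytes, counter: int, digits: int = 6) -> str:
    """HMAC-based one-time password (RFC 4226)."""
    mac = hmac.new(secret, struct.pack(">Q", counter), hashlib.sha1).digest()
    offset = mac[-1] & 0x0F  # dynamic truncation: low 4 bits pick the offset
    value = struct.unpack(">I", mac[offset:offset + 4])[0] & 0x7FFFFFFF
    return str(value % 10**digits).zfill(digits)


def totp(secret: bytes, at: Optional[float] = None, step: int = 30, digits: int = 6) -> str:
    """Time-based one-time password (RFC 6238): HOTP over the current time step."""
    now = time.time() if at is None else at
    return hotp(secret, int(now // step), digits)
```

The device and the system share the secret in advance, so both can compute the same code for the current 30-second window without any message passing between them. A criminal who captures one code gains very little, because it stops working when the window passes.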

Relying on a device in purse or pocket as well as a password in your head is an improvement in security, but it has its drawbacks. It makes that device essential for you to use the system: if it is broken, lost or stolen, you're locked out, even if you know the password. While locking out people who don't have the device is exactly the point, that doesn't help when it is keeping you from legitimately using the system. Moreover, if that device is your smartphone, it changes your phone from a convenience to a necessity. While a smartphone has become a necessity already to some, it is a potentially consequential thing for it to become a requirement for everyone. A hybrid approach is perhaps best: hardware security tokens for those who prefer them, a smartphone for those who for their own reasons carry one around anyway, and for many, both: a smartphone for convenience, with a hardware security token as backup, in case of smartphone loss or damage.

Perhaps there is an even more secure option? What if your second factor wasn't a device, but an actual physical part of your body, such as a finger (for a fingerprint), eye (for a retinal scan), face, or even heartbeat (as measured by e.g. a Nymi Band)? Would that be better still? After all, if it is hard for a criminal to get access to someone's things without being noticed, it is even harder to get access to someone's body. This is indeed possible: the technique is called "biometrics", and it can be an effective second factor. Unfortunately there are a couple of issues with biometrics. First, injuries or health issues can change your body; a cut on your finger may affect your fingerprint, for instance. Second, biometrics have a "revocation" problem. This comes from the fact that a biometric is a unique measurement of your body part: a fingerprint, retinal scan, facial image, or ECG. But measurements are data, and biometric data, like any other data, can be, and has been, breached. If this happens, what will you do? Passwords can be changed, hardware security tokens can be replaced, but how are you going to change your fingerprint, your face, your eye, your heartbeat? While biometrics do have a place in authentication, most commonly to unlock a local device such as a smartphone or a laptop, the lack of revocability makes biometrics less suitable as a second factor for Internet-accessible services.

Regardless of what is chosen for a second factor, the inconvenience of using more than one factor is something that has to be considered. Passwords, especially ones that are easy to remember, are quite convenient. Requiring more than this can make authentication more difficult. If it becomes too difficult, the difficulty becomes a disincentive to use the system. For systems protecting highly sensitive data, some difficulty may be warranted, given the risk. For lower-risk systems, things are less clear. Yet for Internet-accessible systems, due to the prevalence of phishing, something more secure than just passwords seems increasingly necessary. I think Bill Gates is right: like it or not, the traditional password will become increasingly rare on the Internet, for good reason.

Mon 24 Feb 2020 10:19

Some Clarity on Public Cloud Cybersecurity

Some argue yes. Eplexity calls cloud computing "an established best practice for businesses" and claims "your data is typically safer in the public cloud than in an on-premises data centre". In 2016, Sara Patrick of Clutch, guest-writing for Tripwire.com, claimed to have "four reasons why the Cloud is more secure than Legacy Systems". In 2017, Quentin Hardy of the New York Times claimed that cloud data is "probably more secure than conventionally stored data." In 2018, David Linthicum, writing for InfoWorld, claimed "your information is actually safer in the cloud than it is in your own data centre".

One reason given for the claim is that public cloud providers offer greater technical expertise than what is possible on-premises. Eplexity writes:

"Unless your company is already in the business of IT security, spending time and effort on securing your on-premises data distracts from your core functions. Most organizations likely don't have a robust, experienced team of cybersecurity professionals at their disposal to properly protect their on-premises data.

"... As such, cloud providers may employ hundreds or thousands of developers and IT professionals."

This is an argument from size and scale. Cloud providers are bigger than you, and have arguably more IT expertise than you, so they can do a better job than you. But sadly, size and IT expertise are no guarantee of security. Yahoo was a large Internet company, valued at one time at $125 billion. It employed thousands of developers and IT professionals. Yet it was subject to a cybersecurity breach of three billion user accounts in 2013/14; the breach was not disclosed until the fall of 2016, and the full impact was not known until October 2017. The damage to Yahoo's business was significant: Verizon acquired Yahoo in 2017 for less than $5 billion, a deal that was nearly derailed by the disclosure of the breaches.

I think we must conclude from the Yahoo story that size and expertise alone are no guarantee of cybersecurity. Naturally, major cloud providers like Amazon, Microsoft and Google are aware of the Yahoo situation and its consequences. No doubt it illustrated for them the negative impact that a major breach would have on their business. I cannot imagine that they would take the threat lightly.

Yet there have been close calls. Microsoft, a major cloud provider, in December 2019 accidentally disclosed to the world a cloud database on Azure with 250 million entries of customer support data. Happily, a security researcher spotted and reported it, and Microsoft fixed it soon after. Moreover, Zak Doffman, writing for Forbes, reported in January 2020 that Check Point Software Technologies, a cybersecurity vendor, had discovered in 2019 a serious flaw in Microsoft Azure's infrastructure that allowed users of the service to access other users' data. While Check Point reported it immediately to Microsoft, who fixed it quickly, had the flaw been discovered by criminals instead of cybersecurity researchers, a great many things running on Azure could have been compromised. Doffman quotes Yaniv Balmas of Check Point:

"...the take away here is that the big cloud concept of security free from vulnerabilities is wrong. That's what we showed. It can happen there as well. It's just software and software has bugs. The fact I can then control the infrastructure gives me unlimited power."

In the Check Point research article describing the flaw, Balmas concludes:

"The cloud is not a magical place. Although it is considered safe, it is ultimately an infrastructure that consists of code that can have vulnerabilities - just as we demonstrated in this article."

What, then, is the right answer? Well, there isn't one. Neither the public cloud nor on-premises datacentres are magic; neither is "safe". Cybersecurity is a challenge that has to be met, no matter where the service is, or what infrastructure it is using. Happily, this is finally being recognized. Even Gartner Research, a long-time proponent of the public cloud, which predicted as recently as mid-2019 that public cloud infrastructure as a service (IaaS) workloads will suffer at least 60% fewer security incidents than those in traditional data centers, has recently taken a more nuanced view. In the fall of 2019, this prediction of fewer security incidents in the cloud disappeared from Gartner's website, and was replaced by this:

"Through 2024, the majority of enterprises will continue to struggle with appropriately measuring cloud security risks.

"Questions around the security of public cloud services are valid, but overestimating cloud risks can result in missed opportunities. Yet, while enterprises tended to overestimate cloud risk in the past, there's been a recent shift - many organizations are now underestimating cloud risks. This can prove just as detrimental, if not more so, than an overestimation of risk. A well-designed risk management strategy, aligned with the overarching cloud strategy, can help organizations determine where public cloud use makes sense and what actions can be taken to reduce risk exposure."

So does "public cloud use make sense"? Yes, of course it does, for a great many things. But it's not because the public cloud is intrinsically more secure. The public cloud has its own set of cybersecurity issues. There is no "free pass". As always, carefully assess your risks and make an informed decision.

Fri 24 Jan 2020 20:02

Does AI Help or Hinder Cybersecurity?

One view on this is that machine learning, as a powerful technique that enables computer systems to take on tasks previously reserved only for humans, will empower cyberattackers to breach computer security in new ways, or at least in ways more effective than before. I know there is a great deal of anxiety about this. This past fall, I had a conversation with a CIO of a large university, who told me that his university was migrating its internet services to Amazon precisely because he believed that new AI-powered cyberattacks were coming, and he thought Amazon would be better able to fend them off. I'm not sure what I think of this defensive strategy, but that is not the important question here. The key question is this: are AI-powered cyberattacks going to overwhelm cyberdefence?

No doubt AI-powered cyberattacks are a reality. Machine learning is a powerful computer science technique, especially for automation. Cyberattackers, especially sophisticated, well-funded cyberattackers, will use it and I am confident are already using it. But highly automated cyberattacks are nothing new: cyberattackers have been automating their attacks for decades. Smarter automated cyberattacks are certainly something to worry about, but will they be transformative? Maybe. After all, in cybersecurity, the advantage is to the attacker, who needs to find only one hole in the defences, while the defender needs to block all of them. Anything that boosts the effectiveness of the attacker would seem to make the situation worse.

To really see the full picture, it's important to look at the defender too. Machine learning makes the situation worse only if it benefits the attacker more than it benefits the defender. But does it?

I don't have a complete answer to this question: there is a great deal of work still to be done on the application of machine learning to cybersecurity. But I suspect that the answer is a qualified No: rather, all other things being equal, machine learning will likely shift the balance of power towards the defender. The reason is data.

Machine learning is a technique where computer systems, instead of being programmed by programmers, learn what to do from data. But the quality of the learning depends on the quality and, in particular, the quantity of that data: machine learning is most effective when trained with large amounts of it. ImageNet, for instance, a standard training dataset used to train machine learning applications to recognize images, contains about 14.2 million images. But who is more likely to have access to large amounts of good data about a system: the attacker or the defender? Of course, it depends, but it seems to me that, very generally speaking, the defender is more likely to have access to good system data than the attacker. The attacker is trying to get in; the defender is already in.

Of course, this is the broadest of generalizations. The effectiveness of machine learning in the cybersecurity space depends on a great many things. But I am cautiously optimistic. I realize I may be bucking what seems to be becoming a prevailing trend of ever-increasing anxiety about cybersecurity, but I believe here that machine learning has more potential to help than to harm. I look forward to seeing what will emerge in this space over the next few years.

Mon 30 Sep 2019 00:00

What's all the fuss about AI anyway?

AI, broadly understood, is a term used to describe a set of computing techniques that allow computers to do things that human beings use intelligence to do. This is not to say that the computer is intelligent, but rather that the computer is doing something that, if done by a person, would be considered evidence of that person's intelligence. Contrary to widespread opinion, this is not the same thing as an artificial person. In fact, there have been for a long time many things that humans use intelligence to do, that computers do better, whether it be remembering and recalling items, doing arithmetic, or playing chess. But computers do these things using different techniques than humans do. For example, Deep Blue, a custom chess computer built by IBM, beat Garry Kasparov, the then-reigning world chess champion, in 1997, but Deep Blue played chess in a very different way than Garry. Garry relied on his human intelligence, while Deep Blue used programming and data.

However, some computer scientists, noting that people can do things that computers can't, thought long and hard about how people do those things, and how computers might be programmed to do the same. One such technique, deep learning, a neural network technique modelled after the human brain, has been worked on since the 1980s, with slow but steady improvement. But computer power was limited and error rates were often high, and for many years, most computer scientists seemed to feel that other techniques would yield better results. A few kept at it, knowing that the computers of the day were inadequate, but that advances in computing would make things possible that weren't possible before.

This all changed in 2012, when one such researcher, Geoff Hinton, and his students, working here at the University of Toronto, published a seminal deep learning paper that cut error rates dramatically. I remember supporting Geoff's group's research computing at that time. It was a bit challenging: we were using multiple GPUs per machine to train machine learning models at a time when GPU computing was still rather new and somewhat unreliable. But GPUs were absolutely necessary: without them, instead of days of computing time to train a model, months would be required. One of our staff, Relu Patrascu, a computer scientist and skilled system administrator working hand-in-glove with the researchers, tuned and configured and babysat those machines as if they were sick children. But it worked! Suddenly deep learning could produce results closer to what people could do, and that was only the beginning. Since then, deep learning has produced terrific results in all sorts of domains, some exceeding what people can do, and we've not even scratched the surface of what is possible.

But what does deep learning actually do? It is a computer science data classification technique. It's used to take input data and classify it: give it a thing and it will figure out what the thing is. But it classifies things in a way that's different and more useful than traditional computer science methods for classification, such as computer programming, or data storage and retrieval (databases). As such, it can be used to do a lot more than computers previously had been able to do.

To see this, consider traditional computer science methods: for example, computer programming. This approach requires a person to write code that explicitly considers different cases. For example, imagine that you want to classify two-dimensional figures. You want to consider whether they are regular polygons. You could write a computer program that defines for itself what a regular polygon is, and checks each characteristic of an input shape to see whether or not it matches the definition of a regular polygon. Such a program, when given a square, will notice that it is a polygon, it has four sides, and that those sides are equal in length. Since the programmer put into the program a detailed definition of what a regular polygon is, and since the program checks each feature explicitly, it can tell whether or not a shape is a regular polygon, even if the program has never seen that particular shape before.
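
To make this concrete, here is a minimal sketch of such a hand-written classifier. It is only an illustration: the function name `is_regular_polygon`, the representation of a shape as a list of (x, y) corners, and the tolerance `tol` are my own assumptions. It also assumes the corners are listed in order around a simple shape whose edges do not cross.

```python
import math

def is_regular_polygon(vertices, tol=1e-9):
    """Return True if the corners, listed in order, form a regular polygon."""
    n = len(vertices)
    if n < 3:
        return False  # fewer than three corners is not a polygon

    # Rule 1: every side must have the same, non-zero length.
    sides = [math.dist(vertices[i], vertices[(i + 1) % n]) for i in range(n)]
    if sides[0] <= tol or any(abs(s - sides[0]) > tol for s in sides):
        return False

    # Rule 2: every corner must be the same distance from the centre.
    cx = sum(x for x, _ in vertices) / n
    cy = sum(y for _, y in vertices) / n
    radii = [math.dist((cx, cy), v) for v in vertices]
    return all(abs(r - radii[0]) <= tol for r in radii)

square = [(0, 0), (1, 0), (1, 1), (0, 1)]
rectangle = [(0, 0), (2, 0), (2, 1), (0, 1)]
print(is_regular_polygon(square))     # True
print(is_regular_polygon(rectangle))  # False
```

Every rule in this program was put there by a person. The program has never "seen" a square before, yet it gets the answer right because the definition is written into it.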

But what about exceptional cases? Is a circle a regular polygon? It is, after all, the limit of an N-gon as N goes to infinity. This is an "edge case" and programs need to consider those explicitly. A programmer had to anticipate this case and write it into the program. Moreover, if you wanted to consider some other type of shape, a programmer would have to rewrite the code accordingly. There's no going from a bunch of examples to working code without a programmer to write it. Programming is certainly a useful technique, but it has its limits. Wouldn't it be nice to be able to learn from a bunch of examples, without a person having to write all that code?

One way to do that would be data storage and retrieval, for example, a database. Consider the shape classifier problem again. You might put in a bunch of shapes into a database, indicating whether the shape is a regular polygon or not. Once the database is populated, classifying a shape simply becomes looking it up. The database will say whether or not it is a regular polygon.

But what if it's not there? A database has the advantage of being able to learn from examples. But it has a big disadvantage: if it hasn't seen an example before, and is asked about it, it has no idea what the right answer is. So while data storage and retrieval is a very useful computing technique, and it is the backbone of most of our modern information systems, it has its limits. Wouldn't it be nice if a classifier system could provide a useful answer for input data that it's never seen before, without a programmer to tell it how?
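
The lookup approach can be sketched in a few lines, with a Python dictionary standing in for the database. The names `known_shapes` and `classify_by_lookup` are hypothetical, and a real system would of course use a proper database rather than an in-memory table.

```python
# A tiny "database" of shapes we have already been told about.
# Each key is a tuple of (x, y) corners; each value says whether
# that shape is a regular polygon.
known_shapes = {
    ((0, 0), (1, 0), (1, 1), (0, 1)): True,   # unit square
    ((0, 0), (2, 0), (2, 1), (0, 1)): False,  # 2-by-1 rectangle
}

def classify_by_lookup(shape):
    """Look the shape up; return None if it was never stored."""
    key = tuple(map(tuple, shape))
    return known_shapes.get(key)

print(classify_by_lookup([(0, 0), (1, 0), (1, 1), (0, 1)]))  # True
print(classify_by_lookup([(0, 0), (3, 0), (0, 4)]))          # None
```

The second lookup returns nothing at all: the triangle was never stored, so the table has no opinion about it.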

Deep learning does exactly this. Like data storage and retrieval, it learns from examples, through training. Very roughly, a neural network, when trained, is given some input data, and is told what output data it should produce when it sees that data in future. These input and output constraints propagate forward and backwards through the network, and are used to modify internal values such that when the network next sees input like that, it will produce the matching output.

The key advantage of this technique is that if it sees data that is similar to, but not the same as data it has been trained on, it will produce output similar to the trained output. This is very important, because like programming, it can work on input it has never seen, but like databases, it can learn from examples and need not be coded by a programmer anticipating all the details in advance. For our shape example, if trained with many examples of regular polygons, the neural network will be able to figure out whether or not a given input is a regular polygon, and perhaps even more interestingly, it will be able to note that a circle is very like a regular polygon, even if it had never been trained on a circle.
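
Here is a very small sketch of the idea. It is not deep learning: it trains a single artificial neuron, the simplest possible neural network, by gradient descent. The two input features (how much a shape's side lengths vary, and how much its angles vary) and all the numbers are made up for illustration.

```python
import math

# Hypothetical training examples:
# (side-length spread, angle spread) -> 1 if regular, 0 if not.
examples = [
    ((0.00, 0.00), 1), ((0.05, 0.02), 1), ((0.02, 0.04), 1),
    ((0.60, 0.50), 0), ((0.80, 0.30), 0), ((0.40, 0.70), 0),
]

def predict(weights, bias, features):
    """Forward pass: weighted sum of the inputs squashed into (0, 1)."""
    z = bias + sum(w * x for w, x in zip(weights, features))
    return 1.0 / (1.0 + math.exp(-z))

def train(examples, steps=5000, rate=1.0):
    """Nudge the weights so the outputs move toward the labels."""
    weights, bias = [0.0, 0.0], 0.0
    n = len(examples)
    for _ in range(steps):
        grad_w, grad_b = [0.0, 0.0], 0.0
        for features, label in examples:
            error = predict(weights, bias, features) - label
            for i, x in enumerate(features):
                grad_w[i] += error * x / n
            grad_b += error / n
        # Backward step: adjust the internal values against the error.
        weights = [w - rate * g for w, g in zip(weights, grad_w)]
        bias -= rate * grad_b
    return weights, bias

weights, bias = train(examples)
# Neither of these inputs appeared in the training examples.
print(predict(weights, bias, (0.03, 0.03)) > 0.5)  # True: looks regular
print(predict(weights, bias, (0.70, 0.40)) < 0.5)  # True: looks irregular
```

Nobody wrote a rule saying where the boundary between regular and irregular lies. The neuron worked it out from the examples, and it gives sensible answers for inputs that are near, but not identical to, the ones it was trained on.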

Moreover, a deep learning neural network can learn from its own results. This is called reinforcement learning. In this technique, a neural network derives output data from some input data, the results are tested to see how well they work, and the neural network is retrained accordingly. This way a neural network can "learn from its own mistakes", training itself iteratively to classify better. For example, a model of a walking human, with some simple programming to teach it the laws of physics, can, using reinforcement learning, teach itself how to walk. A few years ago, some of the researchers in our department did exactly that. Another example: Google got a lot of attention a few years ago when deep learning researchers there built a deep learning system that used reinforcement learning to become a champion at the game of Go, a game very hard to computerize using traditional techniques, and proved it by beating the reigning Go world champion.
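
The try, test, and adjust loop can be shown with a toy example. The sketch below uses tabular Q-learning, a classic reinforcement learning method that keeps a simple table of scores where a deep learning system would use a neural network. The five-square corridor, the reward of 1 for reaching the last square, and every name in the code are assumptions made for illustration.

```python
import random

N_STATES = 5          # squares 0..4 in a corridor
GOAL = 4              # reaching square 4 ends the episode
LEFT, RIGHT = 0, 1
GAMMA = 0.9           # how much future reward is discounted

def step(state, action):
    """Move one square; reward 1.0 only for arriving at the goal."""
    nxt = max(state - 1, 0) if action == LEFT else state + 1
    reward = 1.0 if nxt == GOAL else 0.0
    return nxt, reward

def learn(episodes=50, seed=0):
    rng = random.Random(seed)
    q = [[0.0, 0.0] for _ in range(N_STATES)]  # score per (square, action)
    for _ in range(episodes):
        state = 0
        while state != GOAL:
            action = rng.choice((LEFT, RIGHT))   # try something
            nxt, reward = step(state, action)    # see how it worked out
            # Adjust the score (learning rate 1, which is fine here
            # because this toy world is fully deterministic).
            q[state][action] = reward + GAMMA * max(q[nxt])
            state = nxt
    return q

q = learn()
policy = ["right" if q[s][RIGHT] > q[s][LEFT] else "left" for s in range(GOAL)]
print(policy)  # ['right', 'right', 'right', 'right']
```

Nothing in the program says that moving right is the way to the goal. It stumbles around at random, scores its own results, and ends up preferring "right" on every square.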

It seems clear to me at this point that deep learning is as fundamental a computing technique as computer programming and databases in building practical computer systems. It is enormously powerful, and is causing a great deal of legitimate excitement. Like all computer science techniques, it has its advantages and drawbacks, but its strengths are where other computer science techniques have weaknesses, and so it is changing computer science (and data science more generally) in dramatic ways. It's an interesting time to be a computer scientist, and I can't even begin to imagine the many things that bright and innovative people will be able to do with it in the future.

Mon 02 Sep 2019 20:14

Existential threats from AI?

Are they right? Is there an existential threat to humanity from AI? Well, yes, I think there actually is one, but not quite in the way Musk, Kissinger, or Hawking fear. Computers have been better than humans for a long time in many cognitive domains. Computers remember things more accurately, process things faster, and scale better than humans in many tasks. AI, particularly machine learning, increases the number of skills where computers are better than humans. Given that humanity has been spending the last couple of generations getting used to a certain arrangement where computers are good at some things and humans are good at others, it can be a bit disconcerting to have this upended by computers suddenly getting good at things they weren't good at before. I understand how this can make some people feel insecure, especially highly accomplished people who define themselves by their skills. Kissinger, Musk and Hawking fear a world in which computers are better at many things than humans. But we have been living in such a world for decades. AI simply broadens the set of skills in question.

As a computer scientist, I am not particularly worried about the notion of computers replacing people. Yes, computers are developing new useful skills, and it will take some getting used to. But I see no imminent danger of AI resulting in an artificial person, and even if it did, I don't think an artificial person is an intrinsic danger to humans. Yet I agree that there are real existential threats to humanity posed by AI. These are not so much long-term or philosophical; to me they are eminently practical and immediate.

The first threat is the same sort of threat as posed by nuclear physics: AI can be used to create weapons that can cause harm to people on a massive scale. Unlike nuclear bombs, AI weapons do not do their harm through sheer energy discharge. Rather, machine learning, coupled with advances in miniaturization and mass production, can be used to create horrific smart weapons that learn, swarms of lethal adaptive drones that seek out and destroy people relentlessly. A deep commitment to social responsibility, plus a healthy respect for the implications of such weapons, will be needed to offset this danger.

The second threat, perhaps even more serious, comes not from AI itself but from the perceptions it creates. AI's successes are transforming human work: because of machine learning, more and more jobs, even white-collar ones requiring substantial training, can be replaced by computers. It's unclear yet to what extent jobs eliminated by AI will be offset by new jobs created by AI, but if AI results in a widespread perception that most human workers are no longer needed, this perception may itself become an existential threat to humanity. The increasingly obvious fact of anthropogenic climate change has already fueled the idea that humanity itself can be viewed as an existential threat to the planet. If AI makes it possible for some to think that they can have the benefits of society without keeping many people around to do the work, I worry we may see serious consideration of ways to reduce the human population to much smaller numbers. This to me is a dangerous and deeply troubling idea, and I believe a genuine appreciation for the intrinsic value of all human beings, not just those who are useful at the moment, will be needed to forestall it. Moreover, a good argument from future utility can also be made: we cannot accurately predict which humans will be the great inventors and major contributors of the future, the very people we need to address anthropogenic climate change and many other challenges. If we value all people, and build a social environment in which everyone can flourish, many innovators of the future will emerge, even from unexpected quarters.

Threats notwithstanding, I don't think AI or machine learning can go back into Pandora's box, and as a computer scientist who has been providing computing support for machine learning since long before it became popular, I would not want it to. AI is a powerful tool, and like all powerful tools, it can be used for many good things. Let us build a world together in which it is used for good, not harm.

Fri 19 Jul 2019 16:13

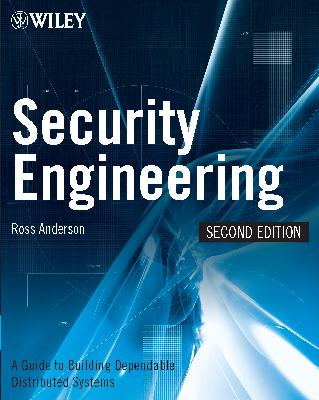

Ross Anderson's Security Engineering