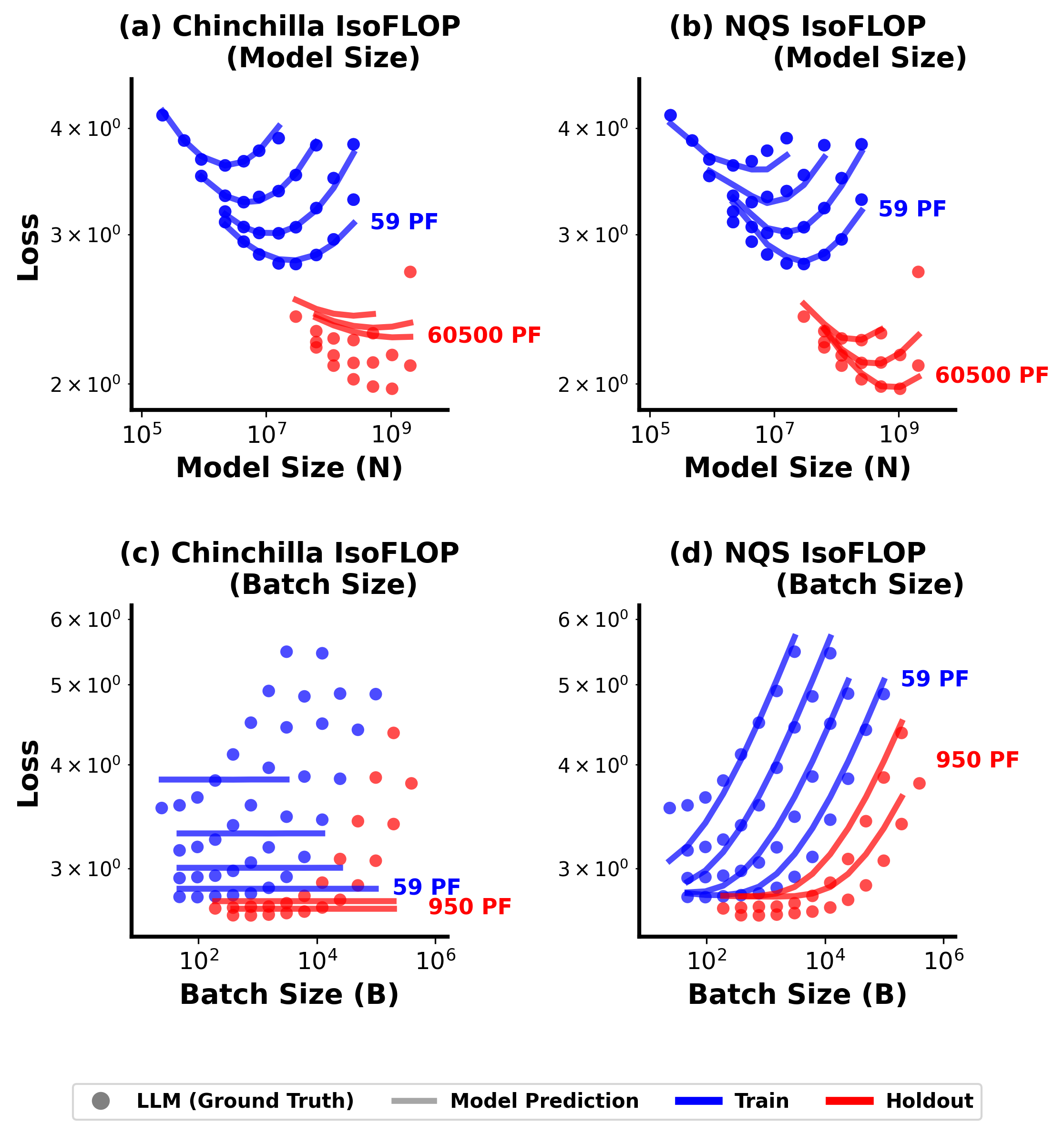

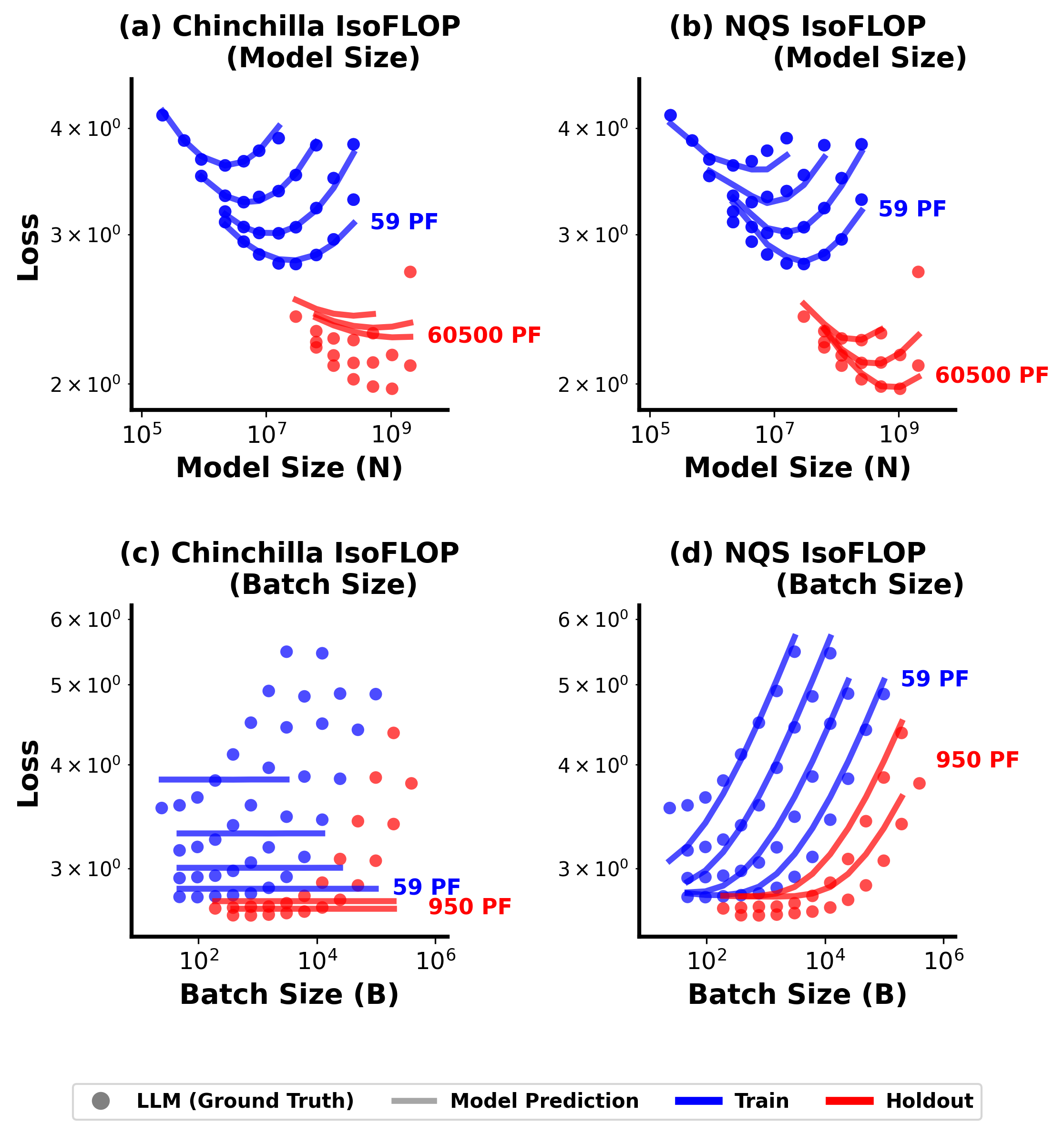

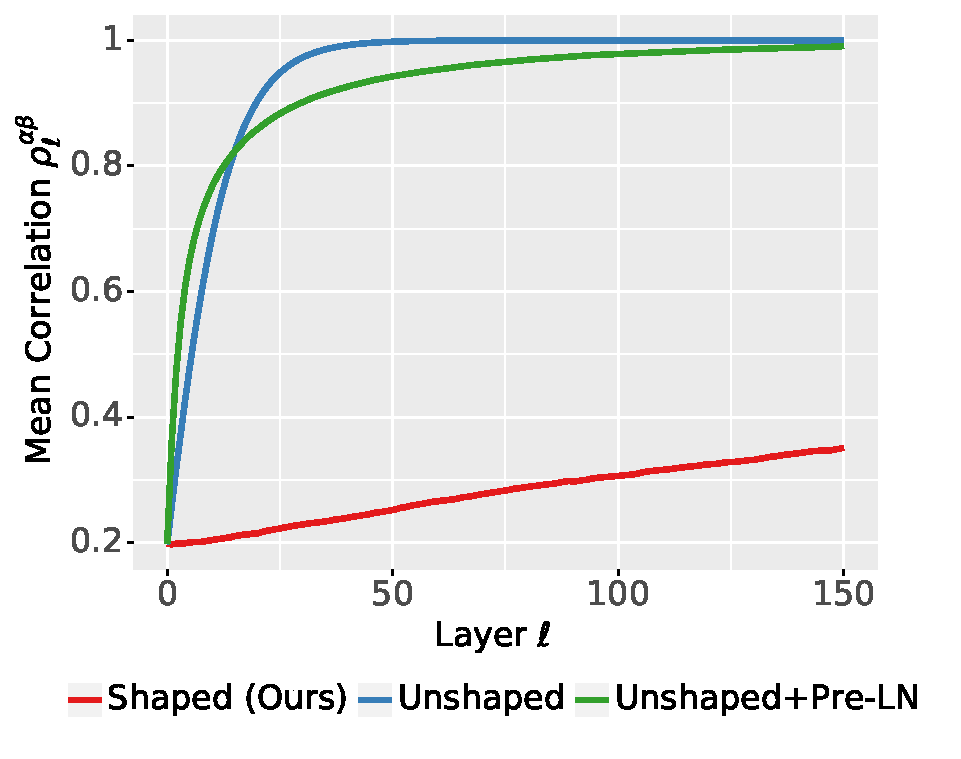

Predicting Large Model Test Losses with a Noisy Quadratic System

International Conference on Machine Learning (ICML), 2026.

I am a PhD student in Computer Science at the University of Toronto and the Vector Institute, advised by Prof. Chris J. Maddison.

I am interested in understanding the training dynamics of neural networks, especially those of Large Language Models (LLMs). My goal is to develop a scientific model of LLM training that is both faithful and simple, so that it is broadly accessible while still practically useful.

As a first step, my advisor Chris and I developed a linear-regression-like surrogate model that quantitatively captures LLM training behaviour. The model reliably predicts how LLM performance responds to changes in the training configuration. A full implementation of this model is available in the GitHub repository for Predicting Large Model Test Losses with a Noisy Quadratic System.

Advances in Neural Information Processing Systems (NeurIPS), 2023. *Equal contribution.